
10/7/2019 Pycharm Docker For Mac

Frequently asked questions (FAQ)

Estimated reading time: 11 minutes

Looking for popular FAQs on Docker for Mac? Check out the for knowledge base articles, FAQs, technical support for various subscription levels, and more.

Stable and Edge channels

Q: How do I get the Stable or Edge version of Docker for Mac?

A: Use the download links for the channels given in the topic. This topic also has more information about the two channels.

I am running PyCharm with a Docker remote interpreter. Everything works fine until the code reaches the rendering part, where it crashes. Assuming you're on OS X: your problem is probably not related to PyCharm but to how Docker tries to connect to X11.

Q: What is the difference between the Stable and Edge versions of Docker for Mac?

A: Two different download channels are available for Docker for Mac:

- The Stable channel provides a general availability, release-ready installer for a fully baked and tested, more reliable app. The Stable version of Docker for Mac comes with the latest released version of Docker Engine. The release schedule is synched with Docker Engine releases and hotfixes. On the Stable channel, you can select whether to send usage statistics and other data.

- The Edge channel provides an installer with new features we are working on, but it is not necessarily fully tested. It comes with the experimental version of Docker Engine. Bugs, crashes, and issues are more likely to occur with the Edge app, but you get a chance to preview new functionality, experiment, and provide feedback as the apps evolve. Releases are typically more frequent than for Stable, often one or more per month. Usage statistics and crash reports are sent by default. You do not have the option to disable this on the Edge channel.

Q: Can I switch back and forth between Stable and Edge versions of Docker for Mac?

A: Yes, you can switch between versions to try out the Edge releases to see what’s new, then go back to Stable for other work.

However, you can have only one app installed at a time. Switching back and forth between Stable and Edge apps can destabilize your development environment, particularly in cases where you switch from a newer (Edge) channel to older (Stable). For example, containers created with a newer Edge version of Docker for Mac may not work after you switch back to Stable because they may have been created leveraging Edge features that aren’t in Stable yet. Just keep this in mind as you create and work with Edge containers, perhaps in the spirit of a playground space where you are prepared to troubleshoot or start over.

To safely switch between Edge and Stable versions, be sure to save images and export the containers you need, then uninstall the current version before installing another. The workflow is described in more detail below. Do the following each time:

1. Use docker save to save any images you want to keep. (See in the Docker Engine command line reference.)
2. Use docker export to export containers you want to keep. (See in the Docker Engine command line reference.)
3. Uninstall the current app (whether Stable or Edge).
4. Install a different version of the app (Stable or Edge).

What is Docker.app?

Docker.app is Docker for Mac, a bundle of the Docker client and Docker Engine.

Docker.app uses the macOS Hypervisor.framework (part of macOS 10.10 Yosemite and higher) to run containers, meaning that no separate VirtualBox is required.

What kind of feedback are we looking for?

Everything is fair game. We’d like your impressions on the download-install process, startup, functionality available, the GUI, usefulness of the app, command line integration, and so on. Tell us about problems, what you like, or functionality you’d like to see added. We are especially interested in getting feedback on the new swarm mode described in. A good place to start is the.

What if I have problems or questions?

You can find the list of frequent issues in. If you do not find a solution in Troubleshooting, browse issues on or create a new one. You can also create new issues based on diagnostics. To learn more, see.

Provides discussion threads as well, and you can create discussion topics there, but we recommend using the GitHub issues over the forums for better tracking and response.

How can I opt out of sending my usage data?

If you do not want auto-send of usage data, use the Stable channel. For more information, see (“What is the difference between the Stable and Edge versions of Docker for Mac?”).

Can I use Docker for Mac with new swarm mode?

Yes, you can use Docker for Mac to test single-node features of swarm mode introduced with Docker Engine 1.12, including initializing a swarm with a single node, creating services, and scaling services.

Docker “Moby” on Hyperkit will serve as the single swarm node. You can also use Docker Machine, which comes with Docker for Mac, to create and experiment with a multi-node swarm. Check out the tutorial at.

How do I connect to the remote Docker Engine API? You might need to provide the location of the Engine API for Docker clients and development tools.

On Docker for Mac, clients can connect to the Docker Engine through a Unix socket: unix:///var/run/docker.sock. See also and Docker for Mac forums topic.
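As a rough illustration of what "connecting through a Unix socket" means for a client, the sketch below opens the socket with Python's standard library and sends a request to the Engine API's `/_ping` endpoint. The helper names (`socket_path_from_host`, `UnixSocketHTTPConnection`, `ping_engine`) are made up for this example, and the code assumes the socket lives at the path quoted above.

```python
import http.client
import socket

# Socket address quoted in the FAQ above.
DEFAULT_DOCKER_HOST = "unix:///var/run/docker.sock"


def socket_path_from_host(docker_host: str) -> str:
    """Turn a unix:// Docker host address into a filesystem path."""
    prefix = "unix://"
    if not docker_host.startswith(prefix):
        raise ValueError(f"not a Unix socket address: {docker_host!r}")
    return docker_host[len(prefix):]


class UnixSocketHTTPConnection(http.client.HTTPConnection):
    """HTTP connection that talks over a Unix domain socket instead of TCP."""

    def __init__(self, socket_path: str, timeout: float = 5.0):
        # The host name is only used for the Host header; no TCP lookup happens.
        super().__init__("localhost", timeout=timeout)
        self.socket_path = socket_path

    def connect(self) -> None:
        sock = socket.socket(socket.AF_UNIX, socket.SOCK_STREAM)
        sock.settimeout(self.timeout)
        sock.connect(self.socket_path)
        self.sock = sock


def ping_engine(socket_path: str) -> int:
    """Send GET /_ping to the Engine API and return the HTTP status code."""
    conn = UnixSocketHTTPConnection(socket_path)
    try:
        conn.request("GET", "/_ping")
        return conn.getresponse().status
    finally:
        conn.close()
```

With Docker running, `ping_engine(socket_path_from_host(DEFAULT_DOCKER_HOST))` should return an HTTP status code; if the socket is missing or not readable, the call raises an `OSError` instead.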

If you are working with applications that expect settings for DOCKER_HOST and DOCKER_CERT_PATH environment variables, specify these to connect to Docker instances through Unix sockets:

    export DOCKER_HOST=unix:///var/run/docker.sock

How do I connect from a container to a service on the host?

The Mac has a changing IP address (or none if you have no network access). Our current recommendation is to attach an unused IP to the lo0 interface on the Mac so that containers can connect to this address. For a full explanation and examples, see under in the Networking topic.

How do I connect to a container from the Mac?

Our current recommendation is to publish a port, or to connect from another container. Note that this is what you have to do even on Linux if the container is on an overlay network, not a bridge network, as these are not routed. For a full explanation and examples, see under in the Networking topic.

How do I add custom CA certificates?

Starting with Docker for Mac Beta 27 and Stable 1.12.3, all trusted certificate authorities (CAs) (root or intermediate) are supported. For full information on adding server and client side certs, see in the Getting Started topic.

How do I add client certificates?

Starting with Docker for Mac 17.06.0-ce, you do not have to push your certificates with git commands anymore. You can put your client certificates in ~/.docker/certs.d/<MyRegistry>:<Port>/client.cert and ~/.docker/certs.d/<MyRegistry>:<Port>/client.key. For full information on adding server and client side certs, see in the Getting Started topic.

How do I reduce the size of Docker.qcow2?

By default Docker for Mac stores containers and images in a file ~/Library/Containers/com.docker.docker/Data/com.docker.driver.amd64-linux/Docker.qcow2.

This file grows on demand up to a default maximum file size of 64GiB. In Docker 1.12 the only way to free space on the host is to delete this file and restart the app. Unfortunately this removes all images and containers.

In Docker 1.13 there is preliminary support for “TRIM” to non-destructively free space on the host. First free space within the Docker.qcow2 by removing unneeded containers and images with the following commands:

- docker ps -a: list all containers.
- docker image ls: list all images.
- docker system prune (new in 1.13): deletes all stopped containers, all volumes not used by at least one container, and all images without at least one referring container.

Note the Docker.qcow2 will not shrink in size immediately. In 1.13 a background cron job runs fstrim every 15 minutes.
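Because the file does not shrink right away, it can help to record its size before and after pruning. The following is a minimal sketch, not part of Docker itself: `file_usage` and `to_gib` are hypothetical helpers, and the leading `~` in the path (the user's home directory) is an assumption about where the file quoted above lives.

```python
import os
from typing import Tuple

# Path quoted in the FAQ; the leading "~" (home directory) is an assumption.
QCOW2_PATH = os.path.expanduser(
    "~/Library/Containers/com.docker.docker/Data/"
    "com.docker.driver.amd64-linux/Docker.qcow2"
)


def file_usage(path: str) -> Tuple[int, int]:
    """Return (apparent_size, allocated_size) in bytes for a file."""
    info = os.stat(path)
    # st_blocks counts 512-byte units on POSIX systems such as macOS and Linux.
    return info.st_size, info.st_blocks * 512


def to_gib(num_bytes: int) -> float:
    """Convert a byte count to GiB."""
    return num_bytes / 2**30


def report(path: str = QCOW2_PATH) -> str:
    """Format both sizes of the disk image for a before/after comparison."""
    apparent, allocated = file_usage(path)
    return f"apparent {to_gib(apparent):.2f} GiB, allocated {to_gib(allocated):.2f} GiB"
```

Calling `report()` once before pruning and again some time later gives two figures to compare. Which of the two sizes changes, and by how much, depends on how the host filesystem handles the trimmed blocks.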

If the space needs to be reclaimed sooner, run this command.

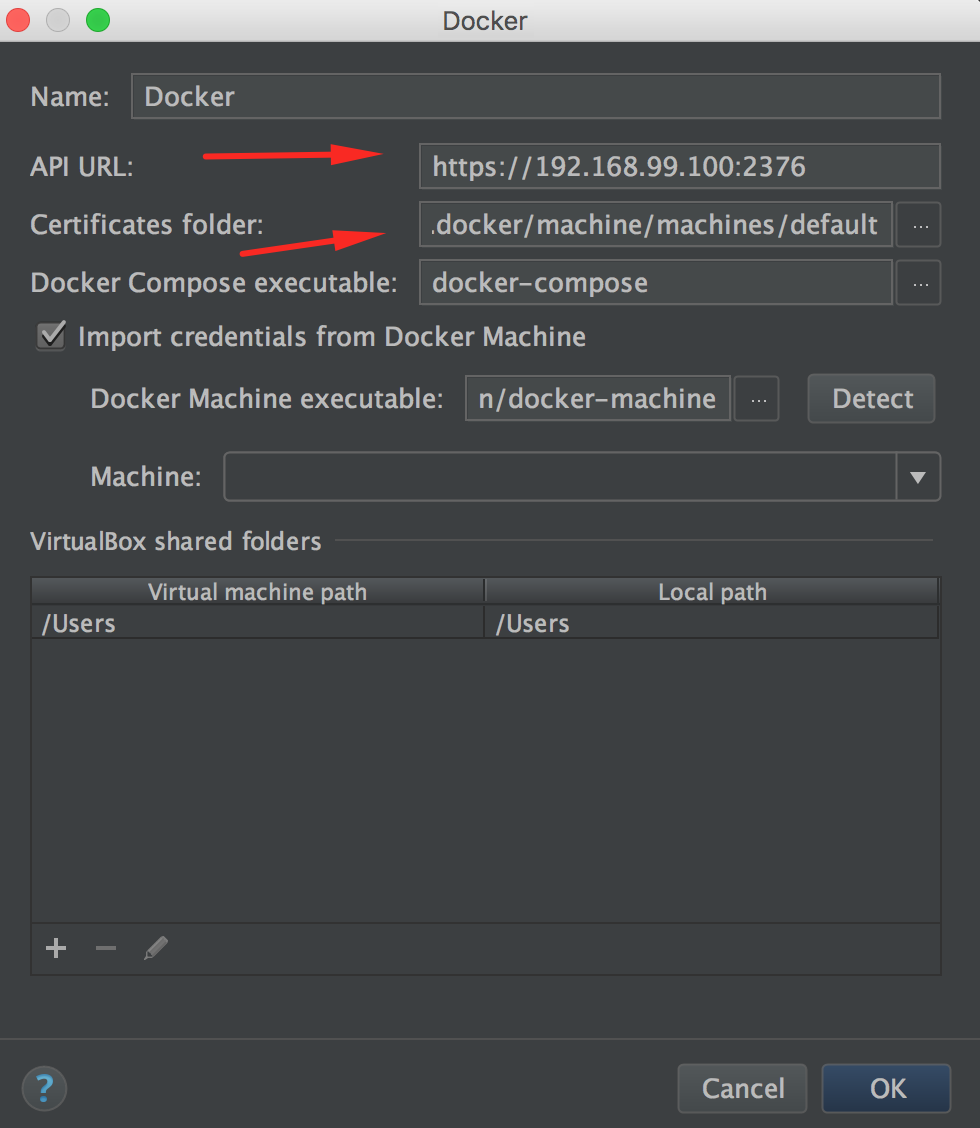

#tensorflow #docker #pycharm

My Deep Learning Dev Environment
Tensorflow + Docker + PyCharm + OSX Fuse + Tensorboard

I've spent quite some time at this stage trying out different things while setting up my deep learning development environment. So I thought I would document my current workflow in case it could help anyone trying to do the same thing.

Objectives

Before starting to build my models, I had a few clear objectives in mind with respect to the development environment I would ideally be using.

    # Start your docker-machine
    docker-machine start default

    # Pull the latest Tensorflow CPU Docker image
    docker pull gcr.io/tensorflow/tensorflow:latest

Once you've pulled the desired Docker image, let's set up your PyCharm Project Interpreter. In PyCharm, go to Preferences > Project Interpreter > Add Remote. Select the Docker configuration followed by the docker-machine instance currently running on your local machine (more than likely default). Once it has connected to your docker-machine, you should see the Tensorflow Docker image you just pulled within the list of images available.

Once this is set up, you're ready to go as far as PyCharm is concerned.

Daily Routine Procedure

On your local machine

Mount remote folders: The first thing you want to do is make sure you can access the scripts you will be working with from your local machine. To do so, mount the home/myusername and, optionally, the data DeepLearningMachine folders on your Mac using OS X Fuse. You'll probably want to make some aliases for all of these commands, by the way, as they tend to be quite long.

    # Mount your Remote Home folder
    sshfs -o uid=$(id -u) -o gid=$(id -g) [email protected]:/home/myusername/ /LocalDevFolder/MountedRemoteHomeFolder

    # Mount your Remote Data folder (optional)
    sshfs -o uid=$(id -u) -o gid=$(id -g) [email protected]:/data/myusername/ /LocalDevFolder/MountedRemoteDataFolder

Here uid and gid are used to map user and group ids between your local and remote machines, as these may differ.
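Since these mount commands are long, one alternative to shell aliases is a small script that assembles them. The sketch below is only an illustration: `build_sshfs_command` is a hypothetical helper, and the remote address and mount point in the usage line are placeholders rather than the ones from my setup. It fills in the local user and group ids the same way `$(id -u)` and `$(id -g)` do.

```python
import os
import shlex
from typing import List, Optional


def build_sshfs_command(remote: str, mount_point: str,
                        uid: Optional[int] = None,
                        gid: Optional[int] = None) -> List[str]:
    """Build the sshfs argument list, mapping remote files to a local uid/gid."""
    # Default to the ids of the user running the script, like $(id -u) / $(id -g).
    uid = os.getuid() if uid is None else uid
    gid = os.getgid() if gid is None else gid
    return ["sshfs", "-o", f"uid={uid}", "-o", f"gid={gid}", remote, mount_point]


# Placeholder remote and mount point, printed as a copy-pasteable shell command.
command = build_sshfs_command("user@remote-host:/home/user/", "/tmp/remote-home")
print(shlex.join(command))
```

The returned list can also be passed directly to `subprocess.run` if you prefer to mount from the script instead of printing the command.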

Start Docker on your local machine: Next, we want to make sure PyCharm will have access to the right libraries to compile our code locally. To do this, simply start a docker machine on your local machine. If you haven't changed anything from your setup, Tensorflow's CPU Docker image should already be within your local docker environment.

    docker-machine start default

Open PyCharm and select your project located in the home folder you have just mounted. Go to that project's Project Interpreter preferences and, within the list of project interpreters available, select the remote Tensorflow interpreter you previously created. Once you have it selected, PyCharm should be able to properly compile your code without any complaints. At this stage, you're ready to play around with your code and change anything you wish.

On your remote machine

Ok, so you've updated your code in PyCharm with a cool new feature and you'd like to train/test your model.

SSH into your machine: The first thing you need to do is simply SSH into your DeepLearningMachine.

    ssh [email protected]

Run a SLURM job: Before going any further, make sure no one else within your team is currently running a job. This could prevent your own job from getting the resources it needs, so it's always good practice to check what jobs are currently running on the remote machine. To do this using SLURM, simply run the squeue command, which will list whatever jobs are currently running on the machine.
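If you'd rather not eyeball the squeue listing every time, the check can be scripted. This is a minimal sketch under stated assumptions: it expects squeue to have been run as `squeue --noheader --format="%i %u %T"` (job id, user, state), and the `Job`, `parse_squeue` and `running_jobs_of_others` names, as well as the sample text, are invented for the example.

```python
from typing import List, NamedTuple


class Job(NamedTuple):
    job_id: str
    user: str
    state: str


def parse_squeue(output: str) -> List[Job]:
    """Parse lines of 'jobid user state' into Job records."""
    jobs = []
    for line in output.splitlines():
        fields = line.split()
        if len(fields) != 3:
            continue  # skip blank or unexpected lines
        jobs.append(Job(*fields))
    return jobs


def running_jobs_of_others(jobs: List[Job], me: str) -> List[Job]:
    """Return jobs in the RUNNING state that belong to someone else."""
    return [job for job in jobs if job.user != me and job.state == "RUNNING"]


# Made-up sample in the assumed format, not real output from my machine.
sample = "101 alice RUNNING\n102 myusername PENDING\n"
for job in running_jobs_of_others(parse_squeue(sample), me="myusername"):
    print(f"job {job.job_id} by {job.user} is still running")
```

An empty result means nobody else is currently running a job, so the resources should be free.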

If, for any reason, one of your previous jobs is still running, you can cancel it using the scancel command. There's no other job running? You're ready to go. So let's start a new job then. You can do this using the following command.

    srun --pty --share --ntasks=1 --cpus-per-task=9 --mem=300G --gres=gpu:15 bash

The srun command gives you quite a lot of options to specify what resources are needed for a specific job. In this example, the cpus-per-task, mem and gres options let you specify the number of CPUs, overall memory and GPUs needed, respectively, for this job.

The pty option runs the job's task in a pseudo-terminal, which is what gives you an interactive shell.

Start Nvidia Docker: Now that you've got resources allocated for your job, start a docker container to run your code in the right environment. Instead of using regular docker, here we'll use NVIDIA-Docker to take full advantage of all our GPUs.

Also, make sure you run Tensorflow's GPU docker image version here and NOT the docker CPU version to take full advantage of your hardware. Don't forget to mount your project folder within the container using the -v option. Once you're inside that container, simply run your code using regular Python commands.

    # Start your container
    nvidia-docker run -v /home/myusername/MyDeepLearningProject:/src -it -p 8888:8888 gcr.io/tensorflow/tensorflow:latest-gpu /bin/bash

    # Don't forget to move to your source folder (mounted at /src above)
    cd /src

    # Run your model
    python myDLmodel.py

On your local machine

Start Tensorboard visualisation: You're nearly there. Your code is now running smoothly and you'd like to see how all of your model's variables are doing in real time using Tensorboard.

This is really the easiest part. First, make sure you know what IP address corresponds to your local docker machine. You can do that using the following command.
